Deploy a Geographically Redundant Replica Set¶

This tutorial outlines the process for deploying a replica set with members in multiple locations. It addresses three-member and five-member replica sets. If you have an even number of replica set members, add an arbiter so that the set has an odd number of members.

For more information on distributed replica sets, see Replica Sets Distributed Across Two or More Data Centers. See also Replica Set Deployment Architectures and Replication.

Overview¶

While replica sets provide basic protection against single-instance failure, replica sets whose members are all located in a single facility are susceptible to errors in that facility. Power outages, network interruptions, and natural disasters are all issues that can affect replica sets whose members are colocated. To protect against these classes of failures, deploy a replica set with one or more members in a geographically distinct facility or data center to provide redundancy.

Considerations¶

Each member of the replica set resides on its own machine and all of the MongoDB processes bind to port 27017 (the standard MongoDB port).

Each member of the replica set must be accessible by way of resolvable DNS or hostnames, as in the following scheme:

- mongodb0.example.net

- mongodb1.example.net

- mongodb2.example.net

- mongodbn.example.net

You will need to either configure your DNS names appropriately, or set up your systems’ /etc/hosts file to reflect this configuration.

Ensure that network traffic can pass between all members in the network securely and efficiently. Consider the following:

- Establish a virtual private network. Ensure that your network topology routes all traffic between members within a single site over the local area network.

- Configure authentication using auth and keyFile, so that only servers and processes with authentication can connect to the replica set.

- Configure networking and firewall rules so that only traffic (incoming and outgoing packets) on the default MongoDB port (e.g. 27017) from within your deployment is permitted.

For more information on security and firewalls, see Inter-Process Authentication.

You must specify the run time configuration on each system in a configuration file stored in /etc/mongodb.conf or a related location. Do not specify the set’s configuration in the mongo shell.

Use an equivalent configuration for each of your MongoDB instances, setting values that are appropriate for your systems as needed. Two of the options deserve particular attention:

The dbpath indicates where you want mongod to store data files. The dbpath must exist before you start mongod. If it does not exist, create the directory and ensure mongod has permission to read and write data to this path. For more information on permissions, see the security operations documentation.

Modifying bind_ip ensures that mongod will only listen for connections from applications on the configured address.

For more information about these run time options and other configuration options, see Configuration File Options.
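As a rough illustration of the per-instance configuration file discussed above, the following sketch renders a legacy "key = value" mongod configuration from a plain object. The helper name renderMongodConf is hypothetical, and the address, data directory, and set name (10.8.0.10, /srv/mongodb/, rs0) are assumptions chosen only for the example; substitute values that suit your systems.

```javascript
// Hypothetical helper: renders a legacy "key = value" mongod configuration file.
// It only builds text; it does not write the file or start mongod.
function renderMongodConf(options) {
  return Object.keys(options)
    .map(function (key) { return key + " = " + options[key]; })
    .join("\n") + "\n";
}

var conf = renderMongodConf({
  port: 27017,              // standard MongoDB port used throughout this tutorial
  bind_ip: "10.8.0.10",     // assumed address on the private network
  dbpath: "/srv/mongodb/",  // assumed data directory; must exist before mongod starts
  replSet: "rs0"            // assumed replica set name; identical on every member
});

console.log(conf);
```

The printed text could be saved as /etc/mongodb.conf (or another location) on each member, changing bind_ip per host while keeping the replica set name the same everywhere.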

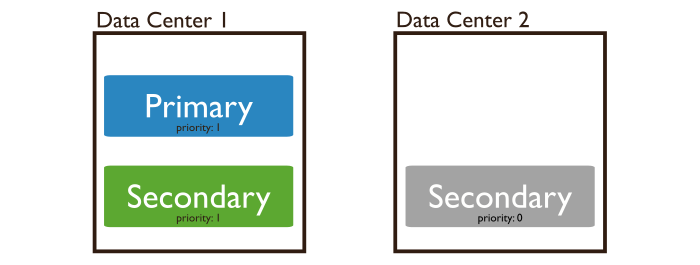

Distribution of the Members¶

If possible, use an odd number of data centers, and choose a distribution of members that maximizes the likelihood that even with a loss of a data center, the remaining replica set members can form a majority or at minimum, provide a copy of your data.
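The effect of a distribution can be checked with simple arithmetic: a set can elect a primary only while a strict majority of its voting members is reachable. The sketch below is a minimal illustration under the assumption that every member has one vote; the function names and the site labels are hypothetical.

```javascript
// Votes needed to elect a primary: a strict majority of all voting members.
function majority(votingMembers) {
  return Math.floor(votingMembers / 2) + 1;
}

// For each site, report whether the members outside that site still form a
// majority. `distribution` maps a site name to its number of voting members.
function survivesSiteLoss(distribution) {
  var sites = Object.keys(distribution);
  var total = sites.reduce(function (sum, site) {
    return sum + distribution[site];
  }, 0);
  var result = {};
  sites.forEach(function (site) {
    result[site] = total - distribution[site] >= majority(total);
  });
  return result;
}

console.log(survivesSiteLoss({ A: 2, B: 1 }));       // three members, two data centers
console.log(survivesSiteLoss({ A: 1, B: 1, C: 1 })); // three members, three data centers
```

In the two-site case, losing Site A leaves a single member that cannot elect a primary, although it still holds a copy of the data. With one member in each of three sites, the loss of any one site leaves a majority.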

Voting Members¶

Never deploy more than seven voting members.

Procedures¶

Deploy a Geographically Redundant Three-Member Replica Set¶

For a geographically redundant three-member replica set deployment, you must decide how to distribute your system. Some possible distributions for the three members are:

- Across Three Data Centers: One member in each site.

- Across Two Data Centers: Two members to Site A and one member to Site B. If one of the members of the replica set is an arbiter, distribute the arbiter to Site A with a data-bearing member.

Start a mongod instance on each system that will be part of your replica set. Specify the same replica set name on each instance. For additional mongod configuration options specific to replica sets, see Replication Options.

Important

If your application connects to more than one replica set, each set should have a distinct name. Some drivers group replica set connections by replica set name.

If you use a configuration file, then start each mongod instance with a command that resembles mongod --config /etc/mongodb.conf, changing /etc/mongodb.conf to the location of your configuration file.

Note

You will likely want to use and configure a control script to manage this process in production deployments. Control scripts are beyond the scope of this document.

Open a mongo shell connected to one of the hosts by issuing the mongo command on that host.

Use rs.initiate() to initiate a replica set consisting of the current member and using the default configuration.

Display the current replica configuration with rs.conf(). The replica set configuration object contains the set name in its _id field, a version number, and a members array that, at this point, lists only the current member.

In the mongo shell connected to the current primary (in this example, mongodb0.example.net), add the remaining members to the replica set using rs.add(), for example rs.add("mongodb1.example.net") followed by rs.add("mongodb2.example.net").

When complete, you should have a fully functional replica set. The new replica set will elect a primary.
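The shell steps above can be summarized as one function over an rs-like helper object. In the mongo shell, the built-in rs object provides initiate(), add(), and conf(). So that the sketch also runs under Node.js, it includes a hypothetical in-memory stand-in (makeStandInRs) that only records hostnames and never contacts a server. The set name rs0 and the version counting in the stand-in are assumptions.

```javascript
// The three shell steps, written against any object that offers the same
// initiate(), add(), and conf() methods as the mongo shell's `rs` helper.
function buildThreeMemberSet(rs) {
  rs.initiate();                   // set consisting of the current member only
  rs.add("mongodb1.example.net");  // remaining members, added on the primary
  rs.add("mongodb2.example.net");
  return rs.conf();
}

// Hypothetical stand-in for illustration: keeps a configuration document in
// memory and stores hostnames exactly as given. Not the real shell helper.
function makeStandInRs(selfHost) {
  var cfg = null;
  return {
    initiate: function () {
      cfg = { _id: "rs0", version: 1, members: [{ _id: 0, host: selfHost }] };
    },
    add: function (host) {
      cfg.members.push({ _id: cfg.members.length, host: host });
      cfg.version += 1;
    },
    conf: function () { return cfg; }
  };
}

var result = buildThreeMemberSet(makeStandInRs("mongodb0.example.net"));
console.log(result.members.map(function (m) { return m.host; }));
```

When entering the same calls interactively, wait until the shell prompt reports that the current member is primary before calling rs.add(), since members can only be added through the primary.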

Optional. Configure the member eligibility for becoming primary. In some cases, you may prefer that the members in one data center be elected primary before the members in the other data centers.

For example, to lower the relative eligibility of the member located in one of the sites (in this example, mongodb2.example.net), set the member’s priority to 0.5.

Issue rs.conf() to determine the members array position for the member.

In the members array, save the position of the member whose priority you wish to change. The example in the next step assumes this value is 2, for the third item in the list. You must record the array position, not _id, as these ordinals will be different if you remove a member.

In the mongo shell connected to the replica set’s primary, issue a command sequence that stores the result of rs.conf() in a variable, sets the priority field of the member at position 2 to 0.5, and passes the modified configuration to rs.reconfig().

When the operations return, mongodb2.example.net has a priority of 0.5. It can still become primary, but members that keep the default priority of 1 are preferred in elections.

Note

The rs.reconfig() shell method can force the current primary to step down, causing an election. When the primary steps down, all clients will disconnect. This is the intended behavior. While most elections complete within a minute, always make sure any replica configuration changes occur during scheduled maintenance periods.
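The difference between array position and _id in the priority steps above is easier to see on a concrete document. The following sketch works on a plain object shaped like the result of rs.conf(); the set name, version, and _id values are assumptions, with the _id sequence deliberately skipping 2 as if a member had been removed earlier. The helper memberPosition is hypothetical.

```javascript
// Assumed shape of a replica set configuration document for illustration.
var cfg = {
  _id: "rs0",
  version: 4,
  members: [
    { _id: 0, host: "mongodb0.example.net:27017" },
    { _id: 1, host: "mongodb1.example.net:27017" },
    { _id: 3, host: "mongodb2.example.net:27017" } // position 2, but _id 3
  ]
};

// Return the array position (not the _id) of the member with the given host,
// or -1 if no member matches.
function memberPosition(config, host) {
  for (var i = 0; i < config.members.length; i++) {
    if (config.members[i].host === host) { return i; }
  }
  return -1;
}

var position = memberPosition(cfg, "mongodb2.example.net:27017");
cfg.members[position].priority = 0.5; // below the default priority of 1

// In the mongo shell, cfg would come from rs.conf() and the change would be
// applied with rs.reconfig(cfg); here the modified member is only printed.
console.log(position, JSON.stringify(cfg.members[position]));
```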

After these commands return, you have a geographically redundant three-member replica set.

Check the status of your replica set at any time with the rs.status() operation.

See also

The documentation of the following shell functions for more information: rs.initiate(), rs.conf(), rs.reconfig(), and rs.add().

Refer to Replica Set Read and Write Semantics for a detailed explanation of read and write semantics in MongoDB.

Deploy a Geographically Redundant Five-Member Replica Set¶

For a geographically redundant five-member replica set deployment, you must decide how to distribute your system. Some possible distributions for the five members are:

- Across Three Data Centers: Two members in Site A, two members in Site B, one member in Site C.

- Across Four Data Centers: Two members in one site, and one member in each of the other three sites.

- Across Five Data Centers: One member in each site.

- Across Two Data Centers: Three members in Site A and two members in Site B.

To deploy a geographically redundant five-member set:¶

The following five-member replica set includes an arbiter.

Start a mongod instance on each system that will be part of your replica set. Specify the same replica set name on each instance. For additional mongod configuration options specific to replica sets, see Replication Options.

Important

If your application connects to more than one replica set, each set should have a distinct name. Some drivers group replica set connections by replica set name.

If you use a configuration file, then start each mongod instance with a command that resembles mongod --config /etc/mongodb.conf, changing /etc/mongodb.conf to the location of your configuration file.

Note

You will likely want to use and configure a control script to manage this process in production deployments. Control scripts are beyond the scope of this document.

Open a mongo shell connected to one of the hosts by issuing the mongo command on that host.

Use rs.initiate() to initiate a replica set consisting of the current member and using the default configuration.

Display the current replica configuration with rs.conf(). The replica set configuration object contains the set name in its _id field, a version number, and a members array that, at this point, lists only the current member.

Add the remaining data-bearing members to the replica set using rs.add() in a mongo shell connected to the current primary, for example rs.add("mongodb1.example.net"), and likewise for each remaining data-bearing member.

When complete, you should have a fully functional replica set. The new replica set will elect a primary.

In the same shell session, add the arbiter (e.g. mongodb4.example.net) by passing its hostname to rs.addArb().

Optional. Configure the member eligibility for becoming primary. In some cases, you may prefer that the members in one data center be elected primary before the members in the other data centers.

For example, to lower the relative eligibility of the member located in one of the sites (in this example, mongodb2.example.net), set the member’s priority to 0.5.

Issue rs.conf() to determine the members array position for the member.

In the members array, save the position of the member whose priority you wish to change. The example in the next step assumes this value is 2, for the third item in the list. You must record the array position, not _id, as these ordinals will be different if you remove a member.

In the mongo shell connected to the replica set’s primary, issue a command sequence that stores the result of rs.conf() in a variable, sets the priority field of the member at position 2 to 0.5, and passes the modified configuration to rs.reconfig().

When the operations return, mongodb2.example.net has a priority of 0.5. It can still become primary, but members that keep the default priority of 1 are preferred in elections.

Note

The rs.reconfig() shell method can force the current primary to step down, causing an election. When the primary steps down, all clients will disconnect. This is the intended behavior. While most elections complete within a minute, always make sure any replica configuration changes occur during scheduled maintenance periods.

Check the status of your replica set at any time with the rs.status() operation.
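For orientation, the five-member set with an arbiter produced by this procedure might yield a configuration document along the following lines. This is a sketch, not captured output: the set name, version number, and the host mongodb3.example.net are assumptions, and the summarize helper is hypothetical. It assumes every member carries the default single vote.

```javascript
// Assumed configuration after adding three more data-bearing members, one
// arbiter, and lowering the priority of mongodb2.example.net.
var cfg = {
  _id: "rs0",
  version: 6,
  members: [
    { _id: 0, host: "mongodb0.example.net:27017" },
    { _id: 1, host: "mongodb1.example.net:27017" },
    { _id: 2, host: "mongodb2.example.net:27017", priority: 0.5 },
    { _id: 3, host: "mongodb3.example.net:27017" },
    { _id: 4, host: "mongodb4.example.net:27017", arbiterOnly: true }
  ]
};

// Count voting members, data-bearing members, and arbiters, and compute the
// number of votes needed to elect a primary.
function summarize(config) {
  var arbiters = config.members.filter(function (m) {
    return m.arbiterOnly === true;
  }).length;
  var voting = config.members.length; // assumes one vote per member
  return {
    voting: voting,
    dataBearing: voting - arbiters,
    arbiters: arbiters,
    majority: Math.floor(voting / 2) + 1
  };
}

console.log(summarize(cfg));
```

The arbiter contributes a vote toward the majority of three but holds no data, so only four of the five members provide copies of the data set.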

See also

The documentation of the following shell functions for more information: rs.initiate(), rs.conf(), rs.reconfig(), rs.add(), and rs.addArb().

Refer to Replica Set Read and Write Semantics for a detailed explanation of read and write semantics in MongoDB.